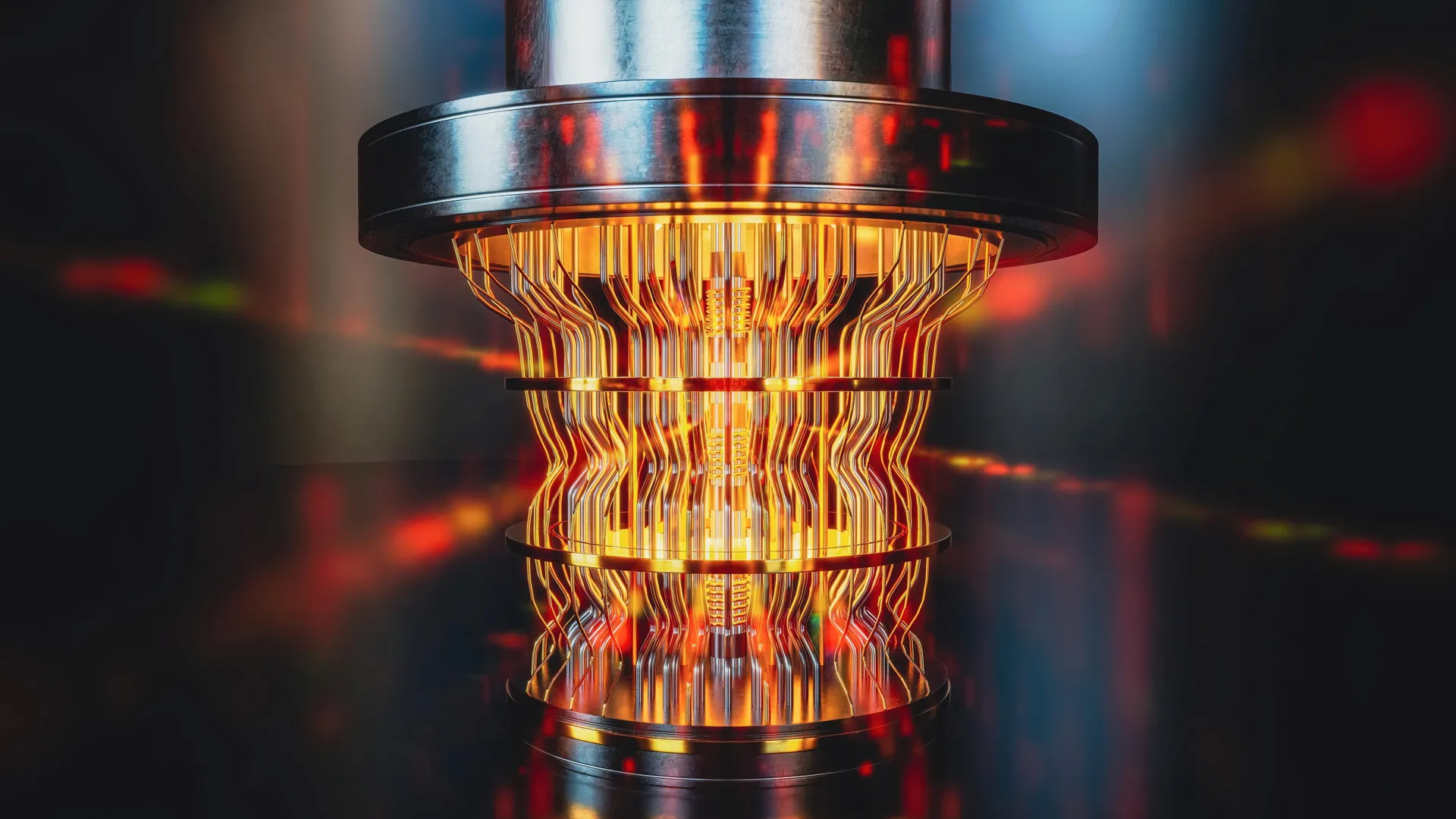

Validating Quantum Computing: New Tools Detect Errors & Ensure Accuracy

Quantum Verification Challenge Gains Urgency as Commercialization Looms

The race to build the world’s first commercially viable quantum computer is intensifying, but a fundamental hurdle is coming into focus: ensuring the accuracy of results from machines capable of calculations far beyond the reach of traditional supercomputers. As investment pours into the sector, verification is no longer a theoretical concern but a practical impediment to realizing the technology’s vast potential.

The promise of quantum computing extends across numerous sectors, from drug discovery and materials science to financial modeling and cryptography. However, the very power that makes these machines so appealing – their ability to tackle previously intractable problems – also makes it incredibly difficult to confirm their outputs.

“There exists a range of problems that even the world’s fastest supercomputer cannot solve, unless one is willing to wait millions, or even billions, of years for an answer,” explains Alexander Dellios, a Postdoctoral Research Fellow at Swinburne University’s Centre for Quantum Science and Technology Theory. Because these results cannot simply be recomputed on a classical machine and compared, new methods are needed to validate quantum computations without reproducing the full calculation.

Decoding the Quantum Output: A New Approach

Researchers at Swinburne University have unveiled a technique designed to validate the results generated by a particular type of quantum device known as a Gaussian Boson Sampler (GBS). GBS machines use photons (particles of light) to perform probability calculations that would take classical supercomputers millennia to complete.

The team’s method allows GBS accuracy to be assessed within minutes on a standard laptop, a significant step forward in the ability to debug and refine these complex systems. It doesn’t simply confirm whether an answer is produced; it assesses how accurate that answer is, identifying subtle errors that might otherwise go unnoticed.

To demonstrate the efficacy of their approach, the researchers applied it to a recently published GBS experiment that would require approximately 9,000 years to replicate using existing supercomputing infrastructure. The analysis revealed a discrepancy between the observed probability distribution and the intended target, highlighting previously undetected noise within the experiment.
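
As a rough illustration of what comparing an observed probability distribution against its intended target involves, the Python sketch below computes two common discrepancy measures for binned photon counts. It is not the Swinburne team’s method, which this article does not describe in detail; the function names, the four-bin layout, and every number in it are hypothetical.

```python
from collections import Counter


def empirical_distribution(samples, num_bins):
    """Turn a list of per-shot photon counts into bin probabilities."""
    counts = Counter(samples)
    total = len(samples)
    return [counts.get(k, 0) / total for k in range(num_bins)]


def total_variation_distance(p, q):
    """Half the L1 distance between two distributions (0 means identical)."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))


def chi_square_statistic(observed_counts, target_probs):
    """Pearson chi-square statistic of observed counts against a target."""
    n = sum(observed_counts)
    return sum(
        (obs - n * prob) ** 2 / (n * prob)
        for obs, prob in zip(observed_counts, target_probs)
        if prob > 0
    )


# Hypothetical target distribution over 0..3 detected photons per shot.
target = [0.4, 0.3, 0.2, 0.1]

# Made-up measurement record (not data from any real experiment).
shots = [0] * 35 + [1] * 30 + [2] * 22 + [3] * 13

observed = empirical_distribution(shots, num_bins=len(target))
observed_counts = [shots.count(k) for k in range(len(target))]

tvd = total_variation_distance(observed, target)
chi2 = chi_square_statistic(observed_counts, target)

print(f"total variation distance: {tvd:.3f}")
print(f"chi-square statistic: {chi2:.3f}")
```

A large value for either measure would flag a mismatch, but deciding whether it reflects real noise or ordinary sampling fluctuation requires a threshold that depends on the number of shots.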

This discovery isn’t merely an academic exercise. It underscores the critical need for robust validation protocols as quantum computing moves closer to practical application. The next step, according to Dellios, is to determine whether the unexpected distribution is itself computationally difficult to reproduce, or whether the observed errors have compromised the device’s “quantumness”, its ability to leverage quantum mechanical phenomena.

The Economic Stakes: A $65 Billion Market by 2030

The development of reliable quantum computers is attracting substantial investment. A recent report by MarketsandMarkets projects the global quantum computing market to reach $65.6 billion by 2030, growing at a compound annual growth rate (CAGR) of 35.6% from 2023. This explosive growth is fueled by both public and private sector funding, with governments worldwide recognizing the strategic importance of quantum technology.
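
The arithmetic behind such a projection follows the standard compound-growth relation, future value = base value × (1 + rate)^years. The Python sketch below back-calculates the 2023 market size implied by the quoted figures. It assumes a seven-year span from 2023 to 2030, and the function name is illustrative rather than taken from the report.

```python
def implied_base_value(future_value, cagr, years):
    """Invert future = base * (1 + cagr) ** years to recover the base value."""
    return future_value / (1 + cagr) ** years


# Figures quoted in the article; the 7-year span is an assumption.
future_billion = 65.6
rate = 0.356
span_years = 2030 - 2023

base_billion = implied_base_value(future_billion, rate, span_years)
print(f"Implied 2023 market size: ${base_billion:.1f} billion")
```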

The United States, for example, has allocated significant resources to quantum research through initiatives like the National Quantum Initiative Act. Similar programs are underway in the European Union, China, and other nations.

However, the economic benefits won’t materialize without addressing the verification challenge. Errors in quantum computations could lead to flawed results in critical applications, undermining trust and hindering adoption. This is particularly concerning in sectors like finance, where even minor inaccuracies can be costly.

Regulatory Scrutiny and the Need for Standards

As quantum computing matures, regulatory bodies are beginning to consider the implications for data security and algorithmic fairness. The potential for quantum computers to break existing encryption algorithms – a threat known as the “quantum apocalypse” – is driving efforts to develop post-quantum cryptography standards.

The National Institute of Standards and Technology (NIST) is leading the development of these standards and finalized its first post-quantum cryptography standards in 2024. They will be crucial for protecting sensitive data in a post-quantum world. The verification of quantum algorithms will be essential to ensure that these new cryptographic methods are truly secure.

Furthermore, the Organisation for Economic Co-operation and Development (OECD) is actively examining the ethical and societal implications of quantum technology, including the need for responsible innovation and international cooperation.

Beyond Verification: Maintaining ‘Quantumness’

Dellios emphasizes that validation is only one piece of the puzzle. “Developing large-scale, error-free quantum computers is a herculean task… A vital component of this task is scalable methods of validating quantum computers, which increase our understanding of what errors are affecting these systems and how to correct for them, ensuring they retain their ‘quantumness’.”

Maintaining “quantumness” – the delicate state of quantum superposition and entanglement that enables these machines to perform unique calculations – is a constant battle against environmental noise and imperfections in hardware. The ability to accurately diagnose and correct errors is paramount to building stable and reliable quantum computers.

According to the World Bank, global investment in research and development reached $2.6 trillion in 2021, highlighting the global commitment to technological advancement. A significant portion of this investment is now flowing into quantum computing, driven by the potential for transformative economic and societal impact. The ongoing work at Swinburne University and similar efforts around the globe are crucial steps towards unlocking that potential.